Dear RLC Members and Supporters,

Your engagement, your energy, and your commitment to defending liberty made this one of the strongest gatherings in our organization’s history. Congratulations to all of our newly elected officers who will help lead the Republican Liberty Caucus into the crucial battles ahead.

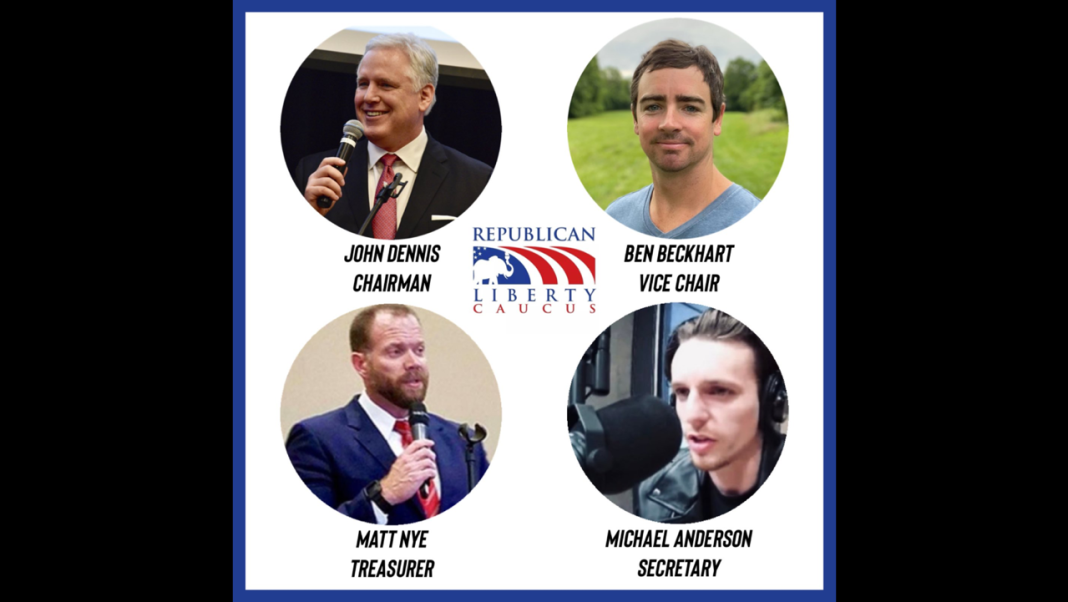

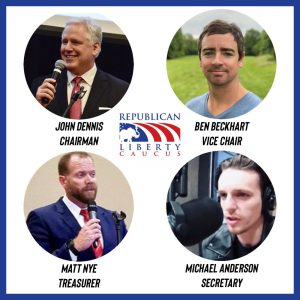

National Officers

Chairman: John Dennis (@RealJohnDennis)

Vice Chair: Ben Beckhart (@Ben_Beckhart)

Treasurer: Matthew Nye (@matthewdnye)

Secretary: Michael Anderson (@HarviiLee)

Regional Directors

Atlantic: Dr. Irene Mavrakakis (@IreneMavrakakis)

South East: Sharon Regan

Great Plains: Mike Franco

South Central: Jeff Hutt

South West: Bill Brown (@billbrown)

At-Large Board Members

Russ Hryzan

James Peinado (@wstxag08)

Bob Sutton

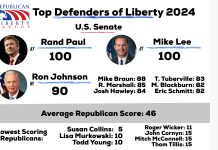

We also extend our gratitude to the tremendous speakers who joined us, including Rep. Thomas Massie, Rep. Warren Davidson, and Senator Rand Paul — champions of constitutional liberty whose leadership continues to inspire our movement.

Throughout the convention, attendees heard powerful discussions on spending restraint, decentralization, free markets, constitutional carry, civil liberties, and restoring limits on federal power.

From the fight to repeal the PREP Act and end federal propaganda, to expanding the PRIME Act, auditing and ultimately ending the Federal Reserve, abolishing the Department of Education, strengthening War Powers authority, and pushing for a national vote on releasing the Epstein files, the message was clear:

We will keep fighting for liberty with conviction, transparency, and courage.

This year’s convention reaffirmed that the Republican Liberty Caucus is growing, energizing the grassroots, and positioning itself to be a principled force within the broader Republican Party.

As the political landscape continues to shift, the RLC will stand firm for the values that built this movement: limited government, individual rights, free markets, and constitutional governance.

Thank you again for your dedication. Together, we will make 2025 a defining year for liberty.

In Liberty,

John Dennis

Chairman, Republican Liberty Caucus