MELBOURNE, FL – While the RLC supports President Trump, we do not agree with tonight’s strikes.

- This move has exponentially increased the threat to Americans in the US and around the world.

- The law of unintended consequences is undefeated. After the debacles in Iraq, Afghanistan, Libya, and Syria, we know that kinetic action rarely turns out as planned.

- The 1973 War Powers Resolution makes it clear that the president may only authorize such strikes a) when the U.S. is under imminent threat, and b) to defend American interests. There was no imminent threat from Iran, and no American interest has been defined.

Under any other circumstance, the Constitution is clear that the war power resides with Congress.

The American people elected President Trump, in part, because his antiwar disposition and master negotiating skills offered the prospect of a peaceful and prosperous America.

Tonight’s bombing of Iran contradicts President Trump’s peace instinct, and we hope the opportunity remains for him to employ his negotiating skills toward a resolution without further military engagement.

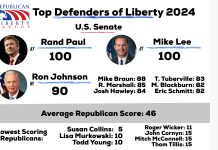

About the Republican Liberty Caucus

The Republican Liberty Caucus is a 527 voluntary grassroots membership organization dedicated to working within the Republican Party to advance the principles of individual rights, limited government, and free markets. Founded in 1991, it is the oldest continuously operating organization within the Liberty Republican movement.